…with important implications for AI

The mind tries to understand the world in terms of concepts, most of which are dressed in language and, in some cases, in mathematics.

But our conceptual understanding of the world suffers from a chicken-and-egg problem: where do the concepts in which this understanding is modeled come from in the first place? How can you build a new theory with old terminology and make sure that it doesn’t suffer from the implicit assumptions inherent in the terminology?

The 18th-century empiricists around Locke, Hume, and Berkeley still exert a strong influence on the way modern science is thought of and thinks of itself. According to empiricism, the world is an objective place waiting to be discovered. Our sensors find real descriptions of this objective reality and express them in the language of concepts and abstraction. But does this really capture the way our brain operates?

Philosophy is the battle against the bewitchment of our intelligence by means of language.

Ludwig Wittgenstein

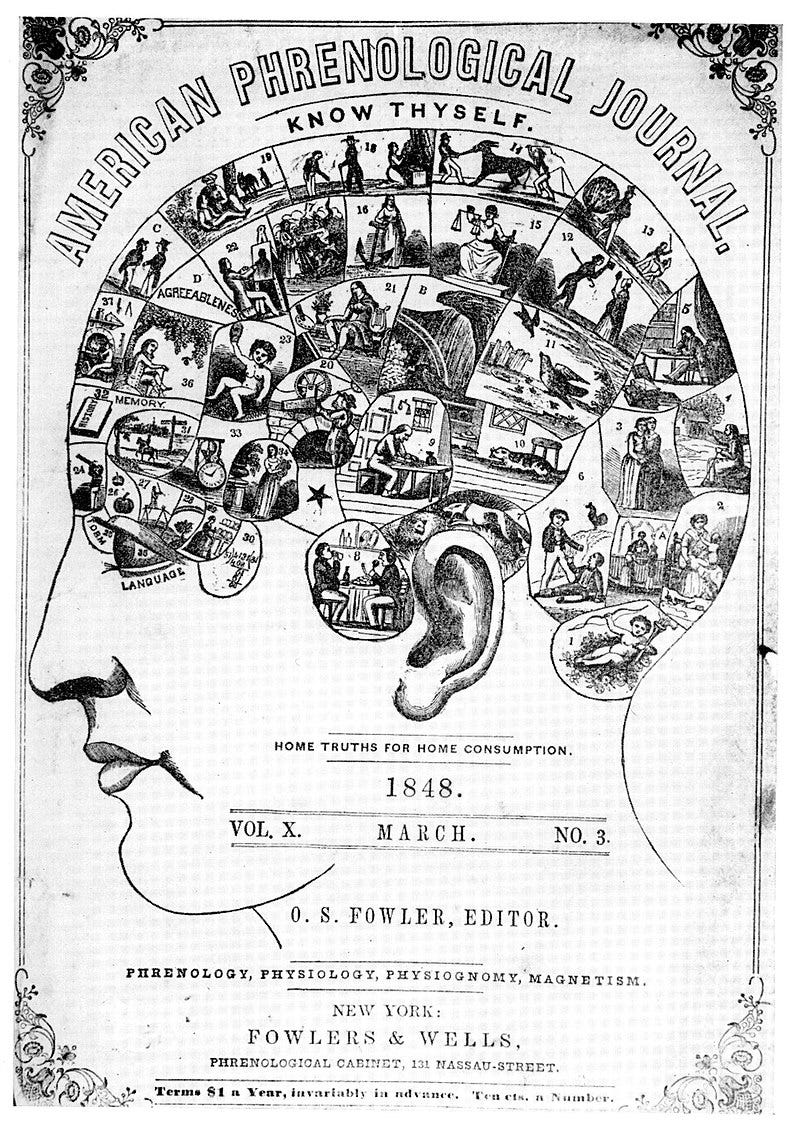

Cognitive neuroscience is a relatively young field, but much of the terminology it relies on is much older, some of it dating back to ancient Greece. The 19th century, with its pseudoscientific school of phrenology, showed how risky it can be to approach the brain with preconceived concepts. Phrenology held that different brain areas are responsible for different specialized tasks, so that one part at the top left takes care of knitting, while another at the bottom right writes poetry (see the figure).

This sounds like pseudoscience to us now, but some consider modern cognitive neuroscience to practice “neo-phrenology” on a different, more subtle level. Many of the most popular concepts in the field, like attention, memory, reasoning, or imagination, go back to the influential 19th-century American psychologist William James, if not to thinkers long before him. We are still looking for specific functions and algorithmic implementations of concepts in the brain that were invented long before anyone had the slightest clue about how the brain actually works.

This is encapsulated in David Marr’s paradigm that we first think of a function in terms of information processing, then look for an algorithm, and then find the neural implementation of the algorithm that does the job (however, I don’t want to strawman anyone here, so it has to be pointed out that Marr, one of the fathers of computational neuroscience, always emphasized an interaction between all three components, and started his career working at the anatomical level).

Nevertheless, this view is still widely influential in the field and accordingly, we still mostly think of the brain from the outside in: this goes hand in hand with von Neumann’s metaphor of the brain as an information processor. We think of the brain as a computer that processes sensory inputs and acts based on the insights it has gained after computing them through.

But as neuroscientist György Buzsáki argues in his great book The Brain From Inside Out, this view is, to say the least, limited. The rest of this article will explore, based on ideas from Buzsáki’s book, how this view affects neuroscience and AI, how the current view falls short in capturing what the brain is actually doing, and how moving away from it could help us get a better grasp on the brain.

“Things won are done; joy’s soul lies in the doing.”

Troilus and Cressida, Act 1, Scene 2

How do inputs actually acquire meaning for us? How do we determine if something we perceive matters to us?

Buzsáki’s answer is quite simple: the only way to bind inputs together in a way that is meaningful to the organism is through action. Actions ground our senses in meaning. The ability of an organism to act on sensory inputs is the sole reason for this organism to develop sensors in the first place. The brain does not care about truth in an objective world, but only about information that helps it satisfy its needs. The brain does not only process and weigh evidence; it actively explores. There is no perception without action, and sensing is more often than not an active process, be it in sniffing, head motion, echolocation, or microsaccades.

Children initially learn about the physics of their body by randomly kicking around, and about the motor skills of their speech by starting off with a nonsensical babble that contains all the syllables the human mouth is capable of producing. They act, and as their behavior is coupled to outcomes in the world through the encouraging feedback of their parents or the painful feedback of kicking their shin against a chair, their actions become grounded in a meaningful way.

Even science can be argued to have only really kicked off (pun intended) with the advent of the experimenter, who takes the role of the active manipulator of the world. This is exemplified well by Galileo throwing objects from the Tower of Pisa to explore the mysteries of gravity.

Accordingly, the speed at which an organism can act determines the relevant timescales for its perception: it’s pointless to know about something when you can’t act on it fast enough, which is precisely what we observe in nature. Trees can’t move, so having saccading eyes to capture their surroundings in real time would be pointless. Our muscle speed is closely related to our cognitive speed, and there is an evolutionary argument to be made that muscle speed was the limiting factor.

Buzsáki claims that there are some fundamental conclusions to be drawn here for neuroscience, challenging what might be called its representation-centric paradigm: instead of asking what a certain neuron or neuronal assembly computes and what this computation represents, we should ask what it does instead.

Addressing this issue is important from a pragmatic perspective as well. The current neuroscientific paradigm shapes the way experiments are designed and carried out each day. In many of them, e.g. in memory research, subjects are presented with stimuli, and their neural activity is recorded while they process these stimuli. However, from an inside-out perspective, purely recording this neural activity is not enough, because it is not grounded in anything.

It is like recording the words of a lost language to which a Rosetta stone does not exist.

According to Buzsáki, the vocabulary of the brain is made up of internally generated dynamical sequences. Words can be thought of as sequences on the neuronal assembly level. Rather than creating novel sequences during exposure to external stimuli, learning consists of selecting the pre-existing internal sequences that best match the novel experience. The brain doesn’t start out as a blank slate but comes with pre-existing, stable dynamics. These dynamic sequences compose a dictionary filled with initially meaningless words, and learning means creating a context in which these words acquire meaning.

Without going too deep into the neuroscientific details, one example would be the formation of new memories between the hippocampus and prefrontal cortex (the strong involvement of the hippocampus in memory formation is impressively shown by patients with lesions in the hippocampus displaying a complete inability to form new memories): here the hippocampus serves as a sequence generator, and the neocortex learns to pick out a subset of those sequences that provide relevant associations to experience, transforming short-term sequences (hippocampus) into long-term memories (cortex).

There is an interesting parallel to be drawn here to some concepts in artificial intelligence.

In the early days of AI, the community was focused on a symbol-based approach to building intelligent systems: abstract symbolic representations of the world were hard-coded into computers, teaching them to acquire common sense and reason abstractly about the world. This was an outside-in approach par excellence, and as it turned out, this didn’t work at all, partly because it was impossible to get from abstract reasoning tables to any real-world behavior.

’Tis written: “In the beginning was the Word!”

Here now I’m balked! Who’ll put me in accord?

It is impossible, the Word so high to prize,

I must translate it otherwise (…)

The Spirit’s helping me! I see now what I need

And write assured: In the beginning was the Deed!

– Goethe, Faust

In a similar vein, it is quite likely that starting with the abstraction and moving downwards to the action ultimately falls short when explaining the brain.

Another interesting parallel between AI and the inside-out approach exists in the form of a neural network architecture called reservoir computing. Reservoir computers consist of an input layer, a hidden layer, and an output layer, much like normal recurrent neural networks. But recurrent neural networks are famously hard to train through backpropagation because of the so-called vanishing and exploding gradient problem.

One clever approach to circumvent these gradient problems is to simply not train the hidden layer at all, holding it fixed after random initialization. The reservoir of the model then constitutes a fixed, non-linear dynamical system, containing a wealth of different pre-wired dynamical sequences (you can think of it as a bunch of neurons all talking to each other). The purpose of learning is then not to learn the dynamics from scratch but only to teach the output layer of the network to cleverly match the dynamics already existing in the reservoir to relevant sequences in the data.
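To make the idea concrete, here is a minimal sketch of a reservoir computer in the echo-state-network style, assuming NumPy. The function names, the reservoir size, the spectral radius, the ridge penalty, and the toy task (predicting the next sample of a sine wave) are all illustrative assumptions rather than anything taken from Buzsáki’s book or a particular library. The point is only that the recurrent weights are drawn once at random and never changed, while a linear readout is fitted on top of them.

```python
import numpy as np

def make_reservoir(n_units, spectral_radius=0.9, input_scale=0.5, seed=0):
    """Draw fixed random recurrent and input weights (never trained)."""
    rng = np.random.default_rng(seed)
    W = rng.normal(size=(n_units, n_units))
    # Rescale so the largest eigenvalue magnitude equals spectral_radius,
    # a common heuristic for keeping the dynamics stable.
    W *= spectral_radius / np.max(np.abs(np.linalg.eigvals(W)))
    W_in = rng.uniform(-input_scale, input_scale, size=n_units)
    return W, W_in

def run_reservoir(W, W_in, inputs):
    """Drive the fixed reservoir with a 1-D input and collect its states."""
    x = np.zeros(W.shape[0])
    states = np.empty((len(inputs), W.shape[0]))
    for t, u in enumerate(inputs):
        x = np.tanh(W @ x + W_in * u)
        states[t] = x
    return states

def train_readout(states, targets, ridge=1e-4):
    """Fit only the linear output weights with ridge regression."""
    A = states.T @ states + ridge * np.eye(states.shape[1])
    return np.linalg.solve(A, states.T @ targets)

# Toy task (an assumption for illustration): predict the next sine sample.
signal = np.sin(np.arange(600) * 0.1)
inputs, targets = signal[:-1], signal[1:]

W, W_in = make_reservoir(n_units=100)
states = run_reservoir(W, W_in, inputs)

washout, split = 100, 400  # skip the initial transient, hold out the tail
w_out = train_readout(states[washout:split], targets[washout:split])

predictions = states[split:] @ w_out
test_mse = float(np.mean((predictions - targets[split:]) ** 2))
print(f"held-out mean squared error: {test_mse:.2e}")
```

All of the learning here happens in `train_readout`; `W` and `W_in` stay exactly as they were initialized, which is the sense in which the reservoir’s dynamics are pre-wired.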

Notice the parallel to Buzsáki’s ideas: according to him, the brain already brings an enormous wealth of complex nonlinear dynamics to the table through its pre-wiring and the insane combinatorial wealth of billions of neurons being coupled together in hundreds of trillions of connections. When we learn to act, we don’t need to start from zero; rather, these pre-existing dynamics acquire meaning by being matched to useful outcomes in the real world.

This is also a way of circumventing the issue of catastrophic forgetting frequently faced within neural networks: they tend to destroy their previously learned abilities when learning something new, even if the new task is in principle relatively similar to the old task. From the perspective of the inside-out brain, if learning something new meant learning completely new dynamics, this would destabilize old dynamics, but if it meant integrating newly learned things into pre-existing dynamical patterns, catastrophic forgetting is avoided much more naturally.

Another interesting recent development in deep learning that points in a similar direction is the edge-popup algorithm: it takes a sufficiently large, randomly initialized network and, instead of adjusting the weights, simply removes connections that don’t add anything meaningful to the performance of the network (akin to the Lottery Ticket Hypothesis), effectively uncovering a preconfigured subnetwork that performs the task without any of its weights ever being trained.
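The following is a rough sketch in the spirit of edge-popup, assuming NumPy, and not a reproduction of the published implementation. Each frozen random weight gets a learnable score, the forward pass keeps only the top-scoring fraction of connections in each layer, and the scores are updated with a straight-through gradient while the weights themselves are never touched. The tiny two-layer network, the toy regression target, the learning rate, and the keep fraction are all hypothetical choices made for illustration.

```python
import numpy as np

def top_k_mask(scores, keep_frac):
    """Binary mask that keeps exactly the top keep_frac fraction of scores."""
    k = max(1, int(round(keep_frac * scores.size)))
    mask = np.zeros(scores.size)
    mask[np.argsort(scores, axis=None)[-k:]] = 1.0
    return mask.reshape(scores.shape)

def forward(X, W1, W2, M1, M2):
    """Two-layer ReLU network using only the connections selected by the masks."""
    hidden = np.maximum(0.0, X @ (W1 * M1).T)
    return hidden, hidden @ (W2 * M2)

rng = np.random.default_rng(0)
n, d, h = 256, 5, 64
X = rng.normal(size=(n, d))
y = np.sin(X[:, 0]) + 0.5 * X[:, 1]  # toy regression target (assumption)

# Random weights, frozen for the whole run.
W1 = rng.normal(size=(h, d)) / np.sqrt(d)
W2 = rng.normal(size=h) / np.sqrt(h)
W1_init, W2_init = W1.copy(), W2.copy()

# Learnable scores, one per connection.
S1 = 0.01 * rng.normal(size=W1.shape)
S2 = 0.01 * rng.normal(size=W2.shape)

keep_frac, lr = 0.5, 0.1
losses = []
for step in range(300):
    M1, M2 = top_k_mask(S1, keep_frac), top_k_mask(S2, keep_frac)
    hidden, pred = forward(X, W1, W2, M1, M2)
    err = pred - y
    losses.append(float(np.mean(err ** 2)))

    # Gradients with respect to the effective (masked) weights.
    g_pred = 2.0 * err / n
    g_W2_eff = hidden.T @ g_pred
    g_hidden = np.outer(g_pred, W2 * M2) * (hidden > 0)
    g_W1_eff = g_hidden.T @ X

    # Straight-through update: treat the mask as differentiable in the scores,
    # so each score's gradient is (effective-weight gradient) * (frozen weight).
    S1 -= lr * g_W1_eff * W1
    S2 -= lr * g_W2_eff * W2

M1, M2 = top_k_mask(S1, keep_frac), top_k_mask(S2, keep_frac)
print(f"loss at first step: {losses[0]:.3f}, lowest loss seen: {min(losses):.3f}")
```

The only thing that changes over the run is which connections are switched on; `W1` and `W2` end exactly as they began.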

It has to be noted that reservoir computing has fallen out of fashion in recent years, and architectures like LSTMs and GRUs are far more popular choices for modeling sequential data. Yet I think ideas from the inside-out approach might still offer some inspiration to the AI and machine learning community. And who knows: similar architectures might celebrate a comeback at some point down the road.

“Strong reasons make strong actions.”

King John, Act 3, Scene 4

Buzsáki goes one step further, arguing that many of our higher reasoning faculties like thought and cognition can ultimately be viewed as internalized actions.

Memories can be built by events in the world being mapped to pre-existing dynamical patterns that constitute the closest match to their content. Future plans might even be understood as episodic memories of actions played in reverse.

Internalizing actions has presented humans with the great evolutionary advantage of imagining a hypothetical future. Plans playing out in our imagination are hypothetical action sequences that don’t need to be acted out in reality but can instead be tried out in a simulated internal representation of the world. This allows adequately complex brains to choose actions based on predictions about their consequences, integrating both past experiences and sensory input from the environment. The more complicated the possible outcomes of actions become, the more loops and layers of sophistication the brain adds, but the purpose ultimately remains the same.

Abstract thought might further have arisen as a byproduct of spatial thought (as I go through in more depth in my article on The Geometry of Thought, and as Barbara Tversky argues in her book Mind in Motion), and be related to spatial processing of physical location and navigation in the hippocampus and entorhinal cortex.

This is supported by the fact that the prefrontal cortex (which is usually claimed to be responsible for all the stuff that makes us most human) is built on a neural architecture similar to that of the motor cortex. While the primary motor cortex projects directly to the spinal cord and muscles, the prefrontal cortex instead projects more indirectly onto brain areas in the limbic system such as the hypothalamus, amygdala, and hippocampus, thereby acting on brain areas responsible for emotional regulation rather than directly on our muscles.

“I have always thought the actions of men the best interpreters of their thoughts.”

― John Locke

The brain has become exceedingly good at thinking and making sense of reality. But brains wouldn’t be here if the primary reason for their existence was to compute integrals or to show off their general knowledge.

This is one of the reasons why I think Buzsáki makes such a convincing case: if we see brains as a large reservoir of dynamics whose primary goal is to be matched to meaningful experience through action, this straightforwardly makes brains actually useful in practice, rooting his theory in evolution.

Our detachment of action and intelligence might be seen as a byproduct of the centuries-old Cartesian divide between body and mind. It is time to overcome this conceptual divide, considering that action could really be at the core of intelligence. It remains all the more exciting to see whether the next breakthroughs in neuroscience and AI are really going to be action-driven, integrating a stronger inside-out approach into their central concepts.

On a last note, I have to emphasize that I had to omit a lot of interesting details within the scope of this article, and I can only recommend going through Buzsáki’s book in more depth or watching one of his talks on YouTube. I have also recently spoken to him on my podcast, going into many of his ideas (and some of the neuroscience) in more detail.

About the Author

Manuel Brenner studied Physics at the University of Heidelberg and is now pursuing his PhD in Theoretical Neuroscience at the Central Institute for Mental Health in Mannheim at the intersection between AI, Neuroscience and Mental Health. He is head of Content Creation for ACIT, for which he hosts the ACIT Science Podcast. He is interested in music, photography, chess, meditation, cooking, and many other things. Connect with him on LinkedIn at https://www.linkedin.com/in/manuel-brenner-772261191/