Tracing the history of Western thought from Plato and Descartes to science and, eventually, AI

This series of posts explores the unfathomably complex intellectual landscape connecting the history of philosophy with modern AI. AI is, in some sense, humanity's most recent attempt to understand itself and its place within the universe. It is an attempt to answer impossibly broad questions like: How does language hook onto the world? What is the nature of truth? Of knowledge? How does one thing represent another?

Of course, we cannot mention AI without mentioning knowledge and certainty. Indeed, the Bayesian statistician Dennis Lindley argues that statistics is the study of uncertainty: the axioms of the probability calculus provide the means through which uncertainties can be manipulated and thus applied to real-world problems. But why did we exchange the certainty of logic for the uncertainty of statistics? What was gained and lost?

Parts 2 and 3 of this series draw on philosophy from the Continental tradition and explore areas not typically associated with AI, such as the extent to which our capacity for reasoning depends on our linguistic abilities and participation in a social community. We also touch on the ethical implications of responsibility deriving from our membership in a linguistic community and the challenges this raises for the prospect of ethical AI.

Words, Names, and Power

For millennia, Western thought has grappled with the question of how word and thing, language and reality, are connected. Verse one of the Gospel of John begins:

In the beginning was the Word, and the Word was with God, and the Word was God.

In the Judeo-Christian tradition, God, the creator of the universe, was closely associated with language. In Genesis, after all, God says “Let there be light.” Later, in Genesis 2:19–20, God presents the animals to Adam in the Garden of Eden, and Adam gives them their names: “Whatever the man called each living creature, that was its name.” The name “Adam” itself was taken from the Hebrew word for earth, adamah, thereby relating the word to the substance used to make the thing.

To speak a name is somehow to communicate an essence. In Exodus 3:13–15, God reveals his name to Moses: “I am who I am.” Yet, enigmatically, this name could not be used to refer to God directly. As a young child you might remember being told not to “take the Lord’s name in vain.” I do, at least. A related phenomenon is our habit of using euphemisms such as “darn” instead of “damn,” and “cripes” or “crikey” instead of “Christ.” But why?

As linguist Keith Allan remarks in his book Linguistic Meaning, knowledge of names gives one power over the name-bearer. Several cultures seem to take this idea seriously. Examples include Isis gaining power over the sun god Ra after learning his name, and the evil figure of Rumpelstiltskin losing his powers once the queen reveals his name. Ancient Greeks would also toss stone tablets containing the names of the gods into the sea to avoid potential blasphemy.

The notion of a password, or code, seems related to this idea of having special power over someone, or access to something special. Alan Turing himself was aware of this. In a 1948 technical report on intelligent machinery, he wrote:

There is a remarkably close parallel between the problems of the physicist and those of the cryptographer. The system on which a message is enciphered corresponds to the laws of the universe, the intercepted messages to the evidence available, the keys for a day or a message to important constants which have to be determined.

Returning to more ancient times, the Book of Judges relates how Gileadite soldiers used the word “shibboleth” to detect and slay enemy Ephraimite soldiers fleeing from battle. Apparently, Ephraimites could not pronounce it correctly. Today the word refers to a sign indicating the in-group or out-group status of the person who utters it.

Finally, the notion of authorial intent permeated theories of meaning up until the 20th century. On this view, the author of a text (e.g., God as author of the universe) determines the meaning of the objects he creates. If we could somehow communicate with God, he would tell us the meaning of his “text” (the objects in the universe). Access to God would potentially bring with it access to the meaning of the universe, but only if we could speak and understand his language. We are lowly, finite creatures, though. We’ll have to discuss theories of interpretation (hermeneutics; Hermes was the Greek messenger god) in a later post. For now, just note the connection between object, symbol, meaning, and text. These ideas play major roles in Continental philosophy.

Essential and Accidental Properties of Objects

The biblical account suggests names are not just randomly given to things, but reflect something deeper. What exactly? Unobservable essences.

Essences are those magical properties that make something what it is. They confer identity on things (we call these things natural kinds). Natural kinds have essential properties that we might use in every possible world to pick out, or identify, instances of the kind. All instances of “water” are made up of H₂O, for example. Accidental properties, in contrast, might only be used in some possible worlds to pick out objects of a kind. For example, only some instances of the kind “dog” have floppy ears.

The idea of natural kinds permeates statistical thinking, but is often unacknowledged and unquestioned. Statistical inference in the social sciences, for example, typically proceeds by positing the existence of a theoretical entity known as a population. Some psychological attribute we’re interested in, say extraversion, is selected and it is assumed to be part of the essence of each member of the population (though this assumption is never tested). On the basis of a representative sample of this population (ideally by random selection), inductive generalizations are made about all members of the population. Psychologists, such as Jan Smedslund and Jaan Valsiner, have called this aspect of modern psychological research pseudoempiricism. The researcher does not realize the “empirical” research she is doing actually makes a priori and noncontingent metaphysical assumptions about things like essences. For that reason, Valsiner and others suggest psychology adopt new methods aimed at understanding the unique individual over time, and not the fiction of an idealized population with a shared essence intrinsic to each member.
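To make the inferential step concrete, here is a minimal Python sketch of the procedure just described. Everything in it is a hypothetical illustration: the "extraversion" scores are invented and normally distributed, and the population size, sample size, and the 1.96 multiplier for a rough 95% interval are assumptions, not figures from any study.

```python
import random
import statistics

# Hypothetical illustration: all numbers are invented for this sketch.
random.seed(0)

# The "population": one extraversion score per member. Simply assigning a
# score to everyone encodes the assumption that each member has the attribute.
population = [random.gauss(50, 10) for _ in range(100_000)]

# A simple random sample, the idealized basis for inductive generalization.
sample = random.sample(population, 1_000)

estimate = statistics.mean(sample)
std_error = statistics.stdev(sample) / len(sample) ** 0.5

# The generalization: a claim about all 100,000 members from 1,000 of them.
print(f"estimated population mean: {estimate:.2f} ± {1.96 * std_error:.2f}")
print(f"actual population mean:    {statistics.mean(population):.2f}")
```

Note that nothing in the code tests whether extraversion is something each individual actually has; the list simply gives every member a score, which is exactly the kind of a priori assumption Smedslund and Valsiner call into question.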

Identity, Meaning and Reference According to Frege

Logic itself can be seen as the working out of a formal system for describing the universe. The identity of objects will be relative to the descriptions given within that system. In the case of proper names, the logician Gottlob Frege argued that two different descriptions could nevertheless refer (bedeuten) to the same object. For instance, ‘the evening star’ and ‘the morning star’ both refer to the planet Venus, but have different senses (Sinne). In other words, the same object might be referred to under different descriptions.

On this view, a proposition (e.g., ‘Snow is white’) refers to a truth value, either the True or the False, and has meaning only insofar as either outcome is possible. Axioms, in contrast, have only one possible referent: the True. This is a small but important detail that will resurface in a later post comparing myth vs. reason.

Wittgenstein extended this idea in what has been called his “picture theory of language.” The true is what is the case, and the false what is not the case. As Elizabeth Anscombe, Wittgenstein’s student, states in her Introduction to Wittgenstein’s Tractatus:

Every genuine proposition picks out certain existences and non-existences of states of affairs as a range within which the actual existences and non-existences of states of affairs are to fall. Something with the appearance of a proposition but which does not do this cannot really be saying anything. It is not a description of reality.

Identity and Conservation Laws: Connecting Physics and Logic

Now that we’ve gone over the basics of logic, our metaphysical task is to figure out under which conditions changes in these properties preserve identity and which do not. There’s an interesting connection with the conservation laws of physics in which the identity of some thing is conserved (preserved) in time despite appearances to the contrary.

If physics is the search for the most basic conservation laws (of, say, energy, momentum, and angular momentum), then conservation laws are equivalent, in the Fregean/Wittgensteinian view, to axioms with only one referent: the True. Bertrand Russell famously said that the conserved quantities in conservation laws are defined in just such a way that they must be conserved. They are therefore mere truisms, simply defined into existence.

If this is so, how are the axioms of physical law any different from the names given by God and Adam to the animals?

I will leave it up to you to decide whether this is the deepest or the shallowest form of truth.

Essence of Objects and Meaning of Words

In any case, the ancients believed the names of things are somehow related to the essences of things. Indeed, the quoted passages from the Gospel of John and Exodus suggest names are somehow sacred. God, and Adam, didn’t just draw names out of a hat. The modern notion of randomness was anathema to our ancestors. There were no blind watchmakers: they believed in an inherent teleology or intentionality to the universe (much later, Hegel will apply this insight to logic). To speak a name, a word, was to access an essence. But how does essence relate to meaning?

Essence is to thing as meaning is to linguistic form. Quine, in his influential 1951 article Two Dogmas of Empiricism, notes that for Aristotle things had essences, but only linguistic forms have meanings:

Meaning is what essence becomes when it is divorced from the object of reference and wedded to the word.

This and similar ideas will continue to haunt Western metaphysics as Aristotle’s ideas are translated, with slight modifications over the centuries, into Christian theology via thinkers like St. Augustine, Boethius, and St. Thomas Aquinas.

Foundations of Computing: An Algebra of Logic

This is all fine and dandy, but what do Frege’s and Wittgenstein’s ideas of meaning and reference have to do with computing? Well, with just a few conceptual leaps we can go from logic to logic gates, which are physically realized versions of the ideas we saw above. As Charles Petzold writes in his book Code, George Boole generalized algebra by divorcing it from the concept of number and wedding it to the notion of a set. Here’s how I think of it.

The algebra of logic is what algebra would be like if restricted to two values, 0 and 1.

The algebraic operator ‘+’ is defined as the union of two sets and the operator ‘*’ is the intersection of two sets. A key insight of Boole’s was realizing the intersection of a set with itself is the set itself (‘X and X = X,’ or ‘X*X=X’… and not X²). Where in algebra is this true? When X is either 0 or 1! Take a moment to let this sink in if it’s not immediately obvious. It wasn’t for me.
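A quick way to let it sink in is to test Boole's law directly. The sketch below checks a handful of ordinary numbers against x * x == x (algebraically, x² = x means x(x − 1) = 0, so only 0 and 1 survive) and then shows the same law for a set. The candidate numbers and the dog names are arbitrary, hypothetical choices.

```python
# Which ordinary numbers satisfy Boole's law x * x == x?
candidates = [-2, -1, 0, 0.5, 1, 2, 3]
idempotent = [x for x in candidates if x * x == x]
print(idempotent)  # [0, 1]

# The same law for sets: intersecting a set with itself changes nothing.
dogs = {"Rex", "Fido", "Lassie"}  # hypothetical example set
print(dogs & dogs == dogs)  # True
```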

Let’s follow Boole further. If we interpret 0 as the set to which nothing belongs (the empty set), then 0x = 0 for all x. Likewise, 1x will be identical to x for every x if 1 contains every object we’re considering in our “universe of discourse.” Compare this with ordinary algebra: 0*9 = 0, and 1*9 = 9. So Boole defined the universe of discourse as 1 and the empty set as 0 to mirror the laws of algebra, essentially allowing its symbolic machinery and operations to work on sets of objects. We’ve moved beyond numbers now to objects.
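Boole's two identities can be checked with Python's built-in sets, using `&` for intersection (Boole's ‘*’). This is a minimal sketch; the four-member universe of discourse and the set of dogs are hypothetical examples.

```python
# A small, hypothetical universe of discourse (Boole's "1").
universe = {"Rex", "Fido", "Tom", "Felix"}
empty = set()  # Boole's "0": the set to which nothing belongs

dogs = {"Rex", "Fido"}

# 0 * x = 0: intersecting with the empty set leaves nothing.
assert empty & dogs == empty
# 1 * x = x: intersecting with the universe leaves the set unchanged.
assert universe & dogs == dogs

# The numeric counterparts from ordinary algebra.
assert 0 * 9 == 0 and 1 * 9 == 9
print("Boole's identities hold for this universe")
```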

A foundational piece of Western thought rests on Aristotle’s principle of non-contradiction: nothing can both belong and not belong to a set. In Boole’s formulation it looks like this: X * (1-X) = 0. In words, a set (X) and (‘*’) everything not in the set (1-X) have in common (‘=’) nothing (‘0’). Boole derived this directly from X*X = X: subtracting X*X from X gives X - X*X = 0, that is, X * (1-X) = 0. A companion law, the law of excluded middle, reads 1 = X + (1-X). In words, the universe of discourse (‘1’) is (‘=’) a set (X) together with (‘+’) everything not included in the set (1-X). Genius, huh? Proponents of fuzzy logic would dispute these laws, however. We’ll save that for another day.
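Both equations above can be verified mechanically, once with sets and once with Boole's two numeric values. In this sketch the universe and the set X are hypothetical, and the complement 1 - X is computed as set difference from the universe.

```python
universe = {"Rex", "Fido", "Tom", "Felix"}  # hypothetical universe ("1")
dogs = {"Rex", "Fido"}                       # the set X
not_dogs = universe - dogs                   # the complement, 1 - X

# X(1 - X) = 0: nothing is both in X and outside it (non-contradiction).
assert dogs & not_dogs == set()
# X + (1 - X) = 1: everything is either in X or outside it (excluded middle).
assert dogs | not_dogs == universe

# The same two laws, checked on the only values Boole allows: 0 and 1.
for x in (0, 1):
    assert x * (1 - x) == 0
    assert x + (1 - x) == 1
print("both laws hold")
```

A fuzzy membership value such as 0.5 would break both numeric checks (0.5 * 0.5 is not 0), which is one way to see why fuzzy logic parts ways with these laws.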

Logic and Statistics

Here’s where the link with uncertainty and statistics comes in. If something is the case (an event occurred), it’s a 1; if something’s not the case (the event didn’t occur), it’s a 0. With this, we can calculate the proportion of times an event occurred (the 1s) relative to all attempts at getting it to occur. That proportion estimates the probability of the event, and from a probability we can compute odds, which are defined as the probability of an event occurring divided by the probability that it doesn’t occur.

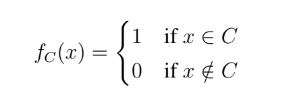
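The step from 0s and 1s to a proportion and then to odds is short enough to write out. This sketch uses a hypothetical record of ten attempts and Python's `fractions.Fraction` so the arithmetic stays exact.

```python
from fractions import Fraction

# Hypothetical record of ten attempts: 1 = event occurred, 0 = it did not.
outcomes = [1, 0, 1, 1, 0, 0, 1, 0, 1, 1]

# The proportion of 1s estimates the probability of the event.
p = Fraction(sum(outcomes), len(outcomes))

# Odds: probability of occurring divided by probability of not occurring.
odds = p / (1 - p)

print(p, odds)  # 3/5 3/2
```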

For example, the ML task of concept learning is about placing objects into classes or groups based on whether they possess a given property or attribute. For some concept C (a ‘natural kind’ as detailed earlier, such as ‘dog’ or ‘cat’), our learning machine’s goal is to learn a function (a ‘decision rule’) that places a new instance either inside or outside the concept class. The math comes in when we want to prove that no other decision rule can do better.
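Here is a deliberately tiny version of that idea. It assumes a single numeric feature and a one-parameter decision rule ("x >= threshold means the instance belongs to C"), and picks the threshold that makes the fewest mistakes on hypothetical labeled examples. It is a toy error-minimizing learner, not a general concept-learning algorithm.

```python
# Hypothetical training data: (feature value, label), where label 1 means
# "instance of concept C" and 0 means "not an instance".
training = [(2.0, 0), (3.5, 0), (4.0, 0), (5.5, 1), (6.0, 1), (7.5, 1)]

def errors(threshold, data):
    """Count mistakes made by the rule 'x >= threshold -> 1, else 0'."""
    return sum((x >= threshold) != bool(label) for x, label in data)

def learn_threshold(data):
    """Pick the candidate threshold with the fewest training mistakes."""
    candidates = sorted(x for x, _ in data)
    return min(candidates, key=lambda t: errors(t, data))

t = learn_threshold(training)

def decision_rule(x):
    """The learned rule: place x inside (1) or outside (0) the concept."""
    return 1 if x >= t else 0

print(t, decision_rule(4.8), decision_rule(6.2))  # 5.5 0 1
```

Notice that the rule never states necessary and sufficient conditions for membership in C; it only draws a boundary that fits the examples it has seen.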

The beauty of ML is that we don’t need to specify a priori what the necessary and/or sufficient conditions of class membership are. Problematically, though, there might only be a family resemblance among instances of this kind, so the classes overlap. In that case, the Bayes error rate gives us a lower bound on the classification error of any classifier, assuming we were omniscient and knew the true distributions involved (the class priors and the class-conditional distributions of the features). In reality, though, we don’t know these and must estimate them from our sample.
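To see what the "omniscient" case looks like, the sketch below assumes two hypothetical classes with known equal priors and Gaussian class-conditional densities on one feature. The Bayes error is the integral of min(prior_0 * f_0(x), prior_1 * f_1(x)); it is approximated here with a simple midpoint sum and compared to the known closed form for equal priors and variances.

```python
import math

# Hypothetical setup: known priors and Gaussian class-conditional densities.
PRIOR_0, PRIOR_1 = 0.5, 0.5
MU_0, MU_1, SIGMA = 0.0, 2.0, 1.0

def gaussian_pdf(x, mu, sigma):
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * math.sqrt(2 * math.pi))

def bayes_error(lo=-10.0, hi=12.0, steps=100_000):
    """Approximate the integral of min(prior_0 * f_0(x), prior_1 * f_1(x)) dx.

    At each x the Bayes classifier picks the larger term, so the smaller
    term is the probability mass it unavoidably gets wrong.
    """
    dx = (hi - lo) / steps
    total = 0.0
    for i in range(steps):
        x = lo + (i + 0.5) * dx  # midpoint rule
        total += min(PRIOR_0 * gaussian_pdf(x, MU_0, SIGMA),
                     PRIOR_1 * gaussian_pdf(x, MU_1, SIGMA)) * dx
    return total

def std_normal_cdf(z):
    return 0.5 * (1 + math.erf(z / math.sqrt(2)))

numeric = bayes_error()
# Closed form for equal priors and equal variances: Phi(-|mu_1 - mu_0| / (2 sigma)).
closed_form = std_normal_cdf(-abs(MU_1 - MU_0) / (2 * SIGMA))
print(f"{numeric:.4f} {closed_form:.4f}")
```

With these parameters both numbers come out near 0.159: even a classifier that knew everything would misclassify about one instance in six, because the two classes genuinely overlap.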

Another major issue will be that it’s not always clear how to assign an event or observed property to a set, and that’s where fuzzy logic, Hegel, Derrida, and others will play a role.

Ordinary Language and Logic

How does all this relate to ordinary language use, where we express ideas in propositional form? For instance, we can connect set union with an “OR” operation and set intersection with an “AND” operation. This makes the connections with Frege and natural language clearer. When a proposition representing a state of affairs holds, we say it’s true and assign it a value of 1. When the state of affairs doesn’t hold, we say the proposition is false and assign it a value of 0.
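With truth values written as 0 and 1, AND and OR become small pieces of arithmetic. In this sketch AND is Boole's product; for OR, plain addition would give 2 when both inputs are 1, so the sketch uses p + q - p*q, which stays within {0, 1}. The function names are illustrative choices.

```python
from itertools import product

# Truth values as Boole's 0 and 1.
def AND(p, q):
    return p * q            # like intersection: 1 only if both are 1

def OR(p, q):
    return p + q - p * q    # like union: 1 if either is 1, never 2

print("p q | AND OR")
for p, q in product((0, 1), repeat=2):
    print(p, q, "|", AND(p, q), " ", OR(p, q))
```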

Frege would later go beyond Boole’s algebra and realize that statements like “All horses are mammals” can be written equivalently as “For all x, if x is a horse, then x is a mammal.” Later refinements, such as having variables stand for predicates and propositions and adding existential quantifiers, would make the syntax even more compressed, giving this artificial language vast expressive power for describing the world. About two centuries earlier, Leibniz had dreamed about such an artificial language (the “universal characteristic”), but never quite managed to work out the details.
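Over a finite domain, Frege's rewriting can be evaluated directly: "for all x" becomes `all(...)`, "there exists an x" becomes `any(...)`, and "if ... then" becomes the material conditional. The domain and predicates below are hypothetical, and this shortcut only works for finite domains; first-order logic itself also ranges over infinite ones.

```python
# A small, hypothetical domain of discourse with the predicates as sets.
domain = ["Secretariat", "Rex", "Tweety", "Black Beauty"]
horse = {"Secretariat", "Black Beauty"}
mammal = {"Secretariat", "Black Beauty", "Rex"}

def implies(p, q):
    """Material conditional: false only when p is true and q is false."""
    return (not p) or q

# "For all x, if x is a horse, then x is a mammal."
all_horses_are_mammals = all(implies(x in horse, x in mammal) for x in domain)

# "There exists an x such that x is a horse" (existential quantifier).
some_horse_exists = any(x in horse for x in domain)

print(all_horses_are_mammals, some_horse_exists)  # True True
```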

From here, it’s only a small jump to implementing this in a physical system of electrical switches, each of which is in one of two states: closed (“on” or 1) or open (“off” or 0). By building ever more complex circuits out of these logic gates, we can physically realize Boolean algebra in a machine. It is an amazing, and in my opinion much under-appreciated, fact that pulses of electricity can somehow represent states of affairs in the world. We discuss issues of representation (particularly from the perspective of Continental philosophy) in Part 2 of this series.
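As an illustration of building complexity from simple gates, here is a sketch of a half adder, a standard textbook circuit that adds two one-bit numbers. The gates are modeled as Python functions on 0 and 1 rather than physical switches, and XOR is assembled only from AND, OR, and NOT.

```python
# Each gate maps bits (0 or 1) to a bit, like a switch that is on or off.
def AND(a, b):
    return a & b

def OR(a, b):
    return a | b

def NOT(a):
    return 1 - a

def XOR(a, b):
    # Built only from AND, OR, NOT: (a OR b) AND NOT (a AND b).
    return AND(OR(a, b), NOT(AND(a, b)))

def half_adder(a, b):
    """Add two one-bit numbers, returning (sum_bit, carry_bit)."""
    return XOR(a, b), AND(a, b)

for a in (0, 1):
    for b in (0, 1):
        s, c = half_adder(a, b)
        print(f"{a} + {b} = carry {c}, sum {s}")
```

For 1 + 1 the circuit outputs carry 1 and sum 0, the binary numeral 10, so a few logical operations have started doing arithmetic.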

So How Does Language Hook onto Reality?

Many questions arise at this point: Is language just reality? Does it “mirror” it? If so, and we were to step outside of reality, which language would we use to describe what we saw? Further, what is the basis for the names we use to describe things? Are words merely useful conventions for naming things, or is there some deeper connection lurking beneath the surface? The Gospel of John hints there is. Later philosophers, such as Jacques Derrida and Richard Rorty, will doubt that there is one “true” relation between the world and the words we use to describe it.

In the opening of his 1921 book Tractatus Logico-Philosophicus, Wittgenstein mystically stated:

What can be said at all can be said clearly, and what we cannot talk about we must pass over in silence.

This suggests logic and language go hand in hand. Logic is the structure of the mind of God and therefore the universe. We assume God is not schizophrenic; there’s only one relation between language and the world. The Bible gives a story for how this relation was defined.

You might also say the goal of science is to work out the details of this relationship. The Enlightenment obsession with taxonomies and encyclopedias was a symptom of the underlying disease of trying to carve nature at its joints: that is, to find the essences, the necessary and sufficient conditions, for all natural kinds.

Wittgenstein’s point is that if we are to say anything at all, it must be couched within some language. And the language par excellence is that of logic. It is what connects us to the universe and tells us what is true and false, possible and impossible within it. If we wish to step outside the universe and describe in some language how our language is related to it, we must be silent, because no such metalanguage exists… until Tarski’s model-theoretic semantics, which we’ll have to cover in a later post.

About the author

Travis Greene is a Ph.D. candidate at the Institute of Service Science in Taipei, Taiwan, where he studies the philosophical, ethical, and judicial implications of modern data science, machine learning algorithms, and recommender systems.

If you want to follow more of his writing, visit his Medium page Datasophy:

https://medium.com/@datasophy

He previously appeared on the ACIT Science Podcast, discussing how human rights should shape our online lives.